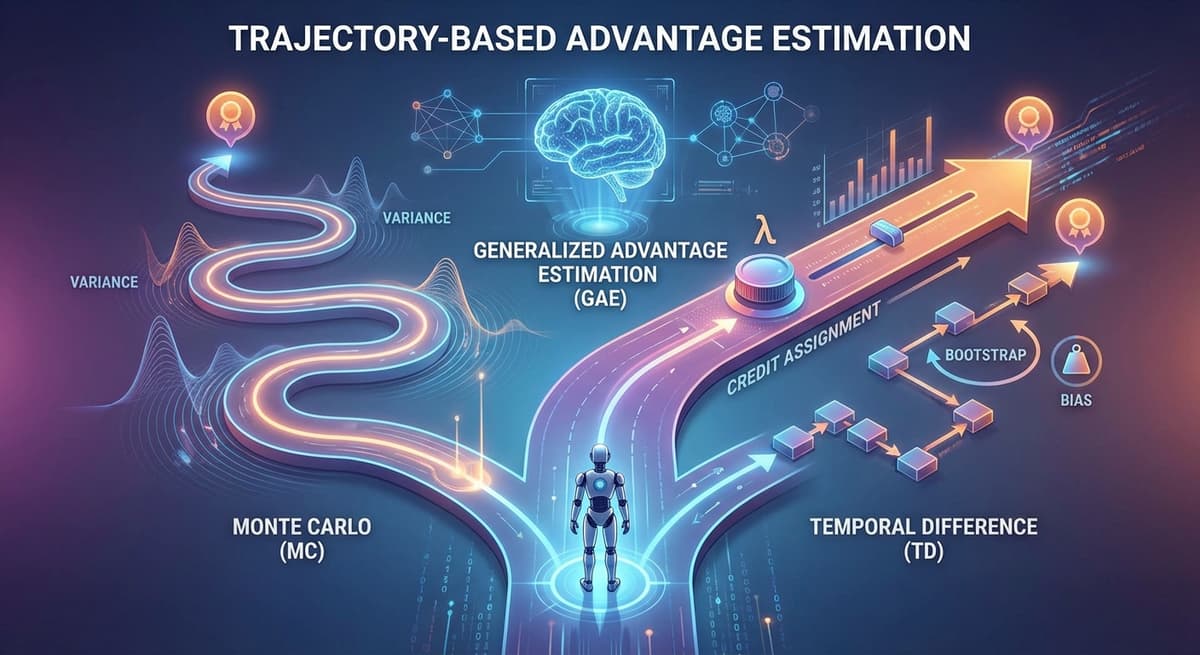

Reinforcement learning is fundamentally about learning from interaction — an agent explores an environment, receives rewards, and improves its behavior over time. At the heart of this process lies a central challenge: credit assignment. When an agent receives a reward, which of its past actions deserve credit or blame for producing it? Policy gradient methods address this through the advantage function, which measures how much better (or worse) a chosen action was compared to the agent's typical behavior in that state:

A(s,a) = Q(s,a) − V(s)

where Q(s,a) represents the expected return after taking action a in state s, and V(s) is the expected return from that state under the current policy. In practice, neither Q nor V is directly accessible — we only observe sampled trajectories of states, actions and rewards. All three methods covered in this post — Monte Carlo, Temporal Difference, and GAE — are trajectory-based: they estimate the advantage from sequences of observed experience rather than from a model of the environment. This distinction matters especially in LLM fine-tuning, where algorithms like REINFORCE, GRPO, DAPO, and PPO all rely on one of these estimators to extract learning signals from generated outputs.

The choice between them reflects the bias–variance tradeoff, one of the most fundamental tensions in reinforcement learning. An unbiased estimator may be too noisy to learn from, while a low-variance estimator may push learning in the wrong direction. Understanding this tradeoff is essential for choosing the right estimator for a given task.

Monte Carlo: Unbiased but High Variance

Monte Carlo (MC) estimation is the most conceptually pure approach to advantage estimation: run the episode to completion and use the actual observed return as the estimate of value. There is no bootstrapping, no reliance on intermediate predictions — just raw experience. Formally, the return at timestep t is computed as

Gₜ = Σ(k=0 to T-t) γᵏ · rₜ₊ₖ

the discounted sum of all future rewards until the terminal timestep T. The advantage is then estimated as Aₜ = Gₜ - V(sₜ), where V(sₜ) acts as a baseline to reduce variance. Since E[Gₜ] = Q(sₜ, aₜ), this produces an unbiased estimate — not because the value function is perfect, but because the return itself is directly observed from the environment.

This reliance on real outcomes is both MC's greatest strength and its biggest weakness. It avoids approximation errors entirely, converging exactly to the true action values under the policy. But the return Gₜ is a sum of many stochastic rewards, and its variance grows with trajectory length. Even for identical state-action pairs, small randomness can produce drastically different returns, making individual estimates highly noisy. This becomes particularly problematic in long-horizon or sparse-reward settings.

A deeper limitation arises in credit assignment. Because the final reward is propagated backward across the entire trajectory, early actions receive the same high-variance signal as later ones. An action taken at the beginning of an episode may be judged based on outcomes hundreds of steps later, making it difficult for the agent to identify which decisions truly mattered.

Where MC Still Dominates

Despite these limitations, Monte Carlo methods remain highly relevant — particularly when episodes are short, rewards are sparse and terminal, or when bootstrapping would propagate weak signals. This is especially evident in modern LLM alignment, where an entire generated response is treated as a trajectory and a single terminal reward (such as correctness) is propagated backward. Algorithms like REINFORCE, GRPO and DAPO all build on this idea, using MC-style returns while introducing techniques to reduce variance without relying on value functions.

Temporal Difference: Low Variance at the Cost of Bias

Temporal Difference (TD) learning takes a fundamentally different approach: instead of waiting for the full trajectory, it updates estimates using immediate feedback and a learned prediction of the future. The TD error is defined as

δₜ = rₜ + γ · V(sₜ₊₁) − V(sₜ)

where rₜ is the immediate reward and V(sₜ₊₁) is the model's estimate of future value. This quantity δₜ serves as a one-step approximation of the advantage: Aₜ ≈ δₜ. Because it depends only on a single reward and a value function output, TD exhibits significantly lower variance than MC, making it more stable and sample-efficient.

The Bias Problem

However, this efficiency introduces bias. The bootstrapped target rₜ + γ · V(sₜ₊₁) is only accurate if the value function is correct, but in practice V is a learned neural network that may be poorly estimated, especially early in training. These errors propagate across updates, leading to systematically biased advantage estimates. This becomes more pronounced in long-horizon tasks, where one-step TD only captures immediate consequences and relies heavily on V(sₜ₊₁) to summarize distant outcomes.

Multi-Step TD

To address this, multi-step TD methods extend the idea by looking ahead multiple steps before bootstrapping:

Gₜ⁽ⁿ⁾ = Σ(k=0 to n-1) γᵏ · rₜ₊ₖ + γⁿ · V(sₜ₊ₙ)

This interpolates between one-step TD (low variance, high bias) and Monte Carlo (high variance, low bias). In this sense, TD learning occupies the opposite end of the spectrum from MC — trading unbiased accuracy for efficiency and stability, and forming the foundation for more advanced estimators like GAE.

GAE: Tunable Balance Between Bias and Variance

Generalized Advantage Estimation (GAE) provides an elegant resolution to the tension between MC and TD. Instead of committing to either extreme, GAE constructs a continuous spectrum of estimators by expressing the advantage as a weighted sum of TD errors across multiple future steps, where the weights decay exponentially:

Aₜᴳᴬᴱ = Σ(l=0 to ∞) (γλ)ˡ · δₜ₊ₗ

where each δₜ₊ₗ is a TD error. In practice, this is computed efficiently using a simple backward recursion over the trajectory. The key insight: instead of trusting a single estimate, GAE aggregates information across multiple time horizons, blending immediate feedback with longer-term signals.

The Role of λ

The parameter λ controls the balance between bias and variance:

- λ = 0 → reduces to one-step TD (low variance, higher bias)

- λ = 1 → equivalent to Monte Carlo (zero bias, high variance)

- λ ≈ 0.95 → empirically robust default across a wide range of tasks

This allows practitioners to tune the estimator based on the reliability of the value function and the stochasticity of the environment.

Improved Credit Assignment

Unlike Monte Carlo, where all actions are judged by the same final outcome, GAE assigns progressively decreasing importance to distant future signals. The advantage at timestep t is influenced most strongly by the immediate TD error δₜ, and less so by δₜ₊₁, δₜ₊₂ and so on. This creates a more structured and localized learning signal, enabling the agent to better identify which actions contributed to which outcomes — while still capturing longer-term dependencies that one-step TD would miss.

Limitations of GAE

GAE is not without weaknesses:

- Temporal decay — the exponential weighting means rewards far in the future contribute very little to the current advantage estimate. In long-horizon tasks, this can lead to vanishing learning signals for early actions

- Compounding bias — because each term depends on the value function, bias can accumulate across many steps, particularly in long sequences like reasoning-heavy tasks

- Dependency on V quality — if the value function is poorly learned (e.g., early in training), lower λ amplifies bias from bad estimates while higher λ introduces MC-level variance

This is one reason why some modern LLM alignment methods prefer pure Monte Carlo returns despite their variance — they avoid the compounded bias introduced by repeated bootstrapping.

In practice, GAE has become a default choice in policy gradient methods like PPO and RLHF-based training systems, offering a principled and computationally efficient way to balance bias and variance while improving credit assignment.

Choosing the Right Estimator

| Monte Carlo | TD Bootstrapping | GAE | |

|---|---|---|---|

| Bias | None | High (depends on V) | Tunable via λ |

| Variance | High | Low | Medium |

| Needs critic? | No | Yes | Yes |

| Best for | Sparse/terminal rewards, short episodes | Dense rewards, online updates | PPO, moderate trajectories |

| LLM relevance | GRPO, DAPO, REINFORCE | Rarely used directly | PPO-based RLHF |

Use Monte Carlo When

- Rewards are sparse and terminal. If the only meaningful signal arrives at the end of an episode (correct/incorrect answer, win/loss), MC avoids the issue of TD needing a good V to propagate that signal backward.

- Trajectory length is short or fixed. MC variance scales with trajectory length — for episodes under ~100 steps, it is often tolerable with a good baseline.

- No critic model is desirable. When training a separate value network is infeasible, MC with a sample-based baseline (as in GRPO) is an effective alternative.

- The task has rule-based, verifiable rewards. Mathematical reasoning, coding, and logic puzzles with deterministic correctness checks are ideal because reward noise is eliminated.

Use TD Bootstrapping When

- Online updates are required. If the system must update its policy without waiting for episode completion (e.g., continuous tasks, real-time control), TD is the only viable option.

- Dense step-level rewards are available. When every transition provides a meaningful reward signal, TD uses that information efficiently without MC's variance accumulation.

- Value function can be accurately estimated. In low-dimensional, stationary environments where V converges quickly, TD's bias is small and its variance advantage makes it superior.

Use GAE When

- Running PPO. GAE is the standard choice for PPO. Use λ = 0.95 as a starting point.

- Moderate trajectory lengths (10–500 steps). GAE works best when trajectories are long enough that one-step TD misses important context, but short enough that exponential decay doesn't eliminate future reward information.

- A reliable value function is available. GAE's advantage over MC is only realized when V is good enough to make bootstrapped TD errors informative. Early in training with a random V, high λ (near-MC) may be better.

- Training stability is a priority. GAE's moderate variance provides more stable gradient estimates than pure MC, reducing the risk of large policy update steps.

Key Takeaways

- All three methods are trajectory-based — they estimate the advantage from observed sequences of experience, not from environment models. The difference lies in how much of the trajectory they use and how they handle the bias-variance tradeoff.

- Monte Carlo is unbiased but noisy. It works best when episodes are short, rewards are terminal, and no critic is available — which is exactly the setup in GRPO and DAPO for LLM fine-tuning.

- TD is efficient but biased. Its value depends entirely on the quality of the learned value function. Poor V estimates early in training can systematically mislead policy learning.

- GAE is the practical default. With λ ≈ 0.95, it balances bias and variance well enough for most PPO-based systems, including RLHF pipelines.

- There is no universally best estimator. The right choice depends on reward structure, trajectory length, value function quality, and whether a critic model is feasible. Understanding the tradeoffs is more important than memorizing which to use.