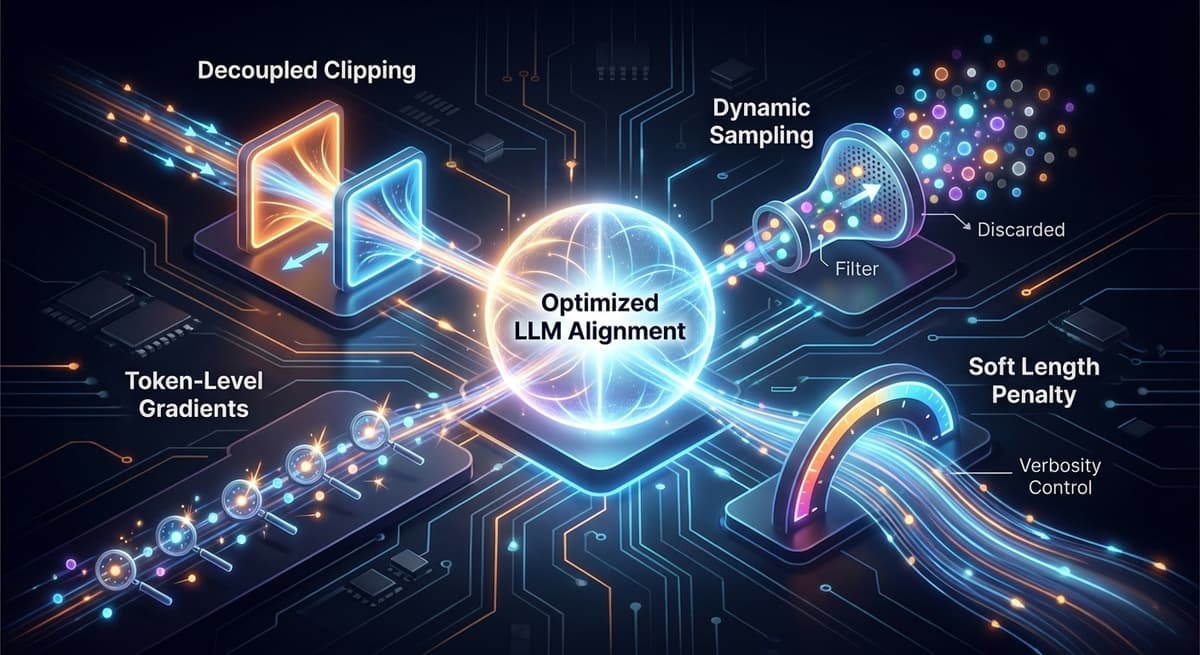

Fine-tuning Large Language Models (LLMs) using reinforcement learning has evolved rapidly with methods like GRPO (Group Relative Policy Optimization) becoming widely adopted. However, as these models are increasingly applied to complex reasoning and alignment tasks, limitations in their stability, efficiency and learning signal quality have become more apparent. Decoupled Clip and Dynamic Sampling Policy Optimization (DAPO) emerges as a refined approach that addresses these shortcomings through four core improvements:

- Decoupled clipping — asymmetric bounds for positive and negative updates

- Dynamic sampling — filtering out uninformative batches

- Token-level policy gradients — precise credit assignment per token

- Soft length penalty — controlling verbosity without hard cutoffs

Where GRPO Falls Short

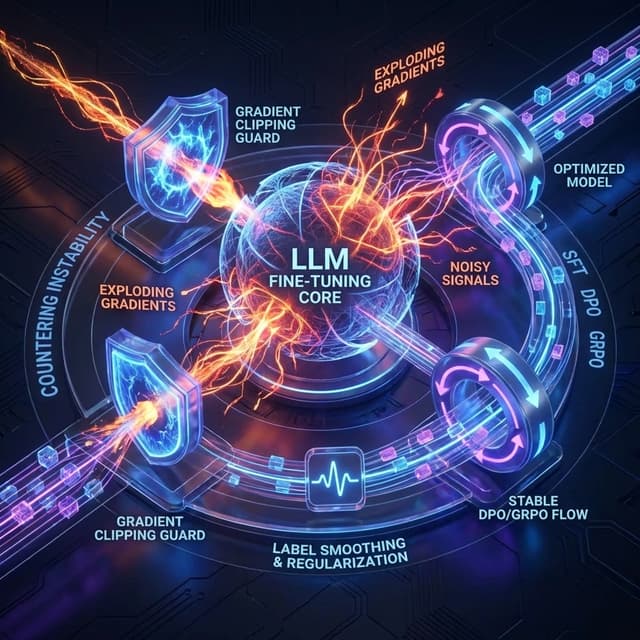

DAPO is a reinforcement learning–based optimization algorithm built on top of the VERL (Volcano Engine RL) framework, designed as an advancement over GRPO — particularly in tasks such as reasoning, writing, and alignment where reward signals tend to be noisy, subjective or ambiguous. While GRPO introduced a simpler alternative to traditional PPO-style methods by removing the need for a critic model, it struggles in these complex scenarios due to several key limitations:

- Zero-gradient batches — when all sampled responses receive similar rewards, normalization eliminates meaningful differences, resulting in no effective learning

- Symmetric clipping — uniform PPO-style clipping restricts both positive and negative updates equally, limiting the model's ability to strongly reinforce high-quality outputs

- No filtering of uninformative samples — all prompts are treated as equally valuable, wasting computation on low-signal batches

- Coarse credit assignment — sequence-level rewards cannot distinguish which tokens or reasoning steps drove the outcome

These issues are further amplified in subjective tasks, where reward distributions are less distinct. DAPO addresses each of these through its four core innovations.

How DAPO Improves on GRPO

Decoupled Clipping: Asymmetric Updates for Better Exploration

GRPO uses a single symmetric clipping range (ε ≈ 0.2) to stabilize policy updates, but this also restricts how much the model can explore different reasoning paths. DAPO introduces a decoupled clipping mechanism with separate bounds for positive and negative updates. Specifically, it allows a higher clipping range (around 0.2–0.28) for upward updates, enabling the model to make stronger adjustments when high-reward responses are observed, while maintaining a lower bound for downward updates to preserve stability.

The core issue with GRPO's symmetric constraint is that it treats positive and negative gradients equally. This dampens the impact of high-reward samples because even beneficial updates are clipped aggressively, limiting the model's ability to strongly reinforce correct reasoning paths. As a result, GRPO often exhibits slow improvement in tasks where clear reward separation is rare. DAPO counters this by explicitly breaking the symmetry — high-reward trajectories are amplified through relaxed clipping, while low-reward or unstable updates remain tightly controlled. This can be seen as a targeted relaxation of PPO-style constraints, tailored for LLM fine-tuning where exploration and credit assignment are more critical than strict policy conservatism.

Dynamic Sampling: Filtering Out Uninformative Batches

Unlike GRPO, which processes all prompts uniformly, DAPO introduces a dynamic, information-aware sampling strategy. In GRPO, when all generated responses for a prompt receive similar rewards, the variance collapses, resulting in near-zero advantages and effectively producing zero-gradient batches. These batches contribute little to learning while still consuming computational resources.

DAPO incorporates an information-theoretic filtering mechanism that selectively focuses on prompt groups where reward variance is sufficiently high to provide a meaningful learning signal. Uninformative groups are skipped, improving the signal-to-noise ratio during training. This also introduces an implicit curriculum learning effect — as the model improves, simpler prompts naturally become less informative and are filtered out, allowing training to concentrate on harder, more discriminative examples.

This variance-based filtering is more principled than uniform sampling because higher variance implies greater entropy reduction potential and stronger gradients for policy improvement. In contrast, low-variance groups contribute mostly noise. By discarding them, DAPO effectively performs importance sampling over prompt groups, prioritizing those with the highest expected learning signal — making training both more stable and computationally efficient.

Token-Level Gradients: Precise Credit Assignment

GRPO computes policy gradients at the sequence level, where each generated response is assigned a single reward that is uniformly backpropagated across all tokens. This introduces a fundamental credit assignment problem — the model cannot distinguish which specific tokens or reasoning steps contributed to success or failure. Important tokens in long responses get diluted, and errors at specific steps are not precisely corrected.

DAPO shifts to a token-level policy gradient formulation, where each token contributes individually to the learning signal. Instead of treating the output as a monolithic unit, DAPO decomposes learning across the generation process, allowing it to capture step-wise correctness. This is particularly important in long chain reasoning tasks, where early tokens may be correct while later ones introduce errors, or vice versa.

This change also improves gradient alignment and reduces variance. In sequence-level training, gradients are noisy because the same reward is broadcast across all tokens, including irrelevant ones. Token-level gradients are more selective and better aligned with actual contributions. In effect, it shifts the learning paradigm from output-level supervision to process-level supervision — enabling models to learn not just which outputs are better, but which specific reasoning steps lead to better outcomes.

Soft Length Penalty: Controlling Verbosity Without Hard Cutoffs

Reinforcement learning based fine-tuning often suffers from a tendency toward overly long and verbose outputs, as models implicitly learn that longer responses may correlate with higher rewards. In GRPO, this is typically handled through external reward shaping or heuristics, which can be brittle and task-dependent.

DAPO addresses this more systematically by integrating a soft length penalty directly into the reward function. Instead of applying hard cutoffs, DAPO gradually reduces the reward for responses that exceed a certain length threshold and in extreme cases, fully cancels rewards for excessively long outputs. This ensures that verbosity is discouraged while still preserving useful reasoning content when longer responses are genuinely necessary.

The use of a gradual penalty avoids the pitfalls of hard constraints, which can truncate valid reasoning chains or introduce discontinuities in the optimization landscape. By allowing partial credit for moderately long responses while penalizing excessive verbosity, DAPO preserves meaningful gradients and avoids destabilizing updates — guiding the model towards concise yet complete reasoning.

When to Use DAPO vs GRPO

| Scenario | GRPO | DAPO |

|---|---|---|

| Math, coding, factual QA (clear correctness, consistent rewards) | Sufficient — strong signals, simple pipeline | Unnecessary complexity |

| Alignment, creative writing, dialogue (subjective, noisy rewards) | Struggles with zero-gradient batches and weak signals | Strong — dynamic sampling filters noise, decoupled clipping amplifies good outputs |

| Long-form generation | Verbosity risk — no built-in length control | Controlled — soft length penalty maintains information density |

| Multi-step reasoning | Coarse credit assignment dilutes token-level signals | Precise — token-level gradients assign credit per reasoning step |

| High reward variance | Uniform sampling wastes compute on uninformative batches | Efficient — variance-based filtering focuses on informative samples |

In short: GRPO works well when reward signals are stable, low-noise, and easily distinguishable — such as in mathematics and coding tasks with deterministic correctness checks. DAPO provides a more robust framework when learning signals are sparse, noisy, or require nuanced interpretation — including alignment, creative writing, and complex reasoning tasks where the additional mechanisms for filtering, clipping, and credit assignment translate into meaningful gains.

Key Takeaways

- GRPO's core limitations are structural. Zero-gradient batches, symmetric clipping, and sequence-level credit assignment are inherent to its design, not edge cases.

- DAPO's four innovations address distinct failure modes. Decoupled clipping handles exploration, dynamic sampling handles signal quality, token-level gradients handle credit assignment, and soft length penalties handle verbosity.

- Not every task needs DAPO. For objective tasks with clean rewards, GRPO's simplicity is an advantage. DAPO's complexity pays off primarily in subjective or noisy reward settings.

- Dynamic sampling creates an implicit curriculum. As the model improves, easy prompts are automatically filtered out, focusing training on progressively harder examples.

- Token-level optimization is the shift from judging outputs to judging reasoning. This is increasingly seen as essential for improving LLM reasoning capabilities.